We’re hard at work on our project called New Mathematics and Software for Agent-Based Models. It’s impossible to explain everything we’re doing while it’s happening. But I want to record some of our work. So please pardon how I skim through a bunch of already known ideas in my desperate attempt to quickly reach the main point. I’ll try to make up for this by giving lots of references.

Today I’ll talk about an interesting class of models we have developed together with Sean Wu. We call them ‘stochastic C-set rewriting systems’. They’re just part of our overall framework, but they’re an important part.

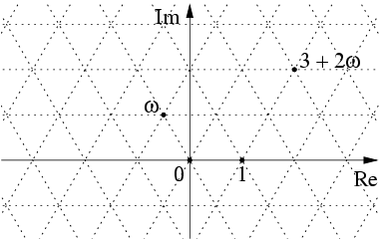

In this sort of model time is continuous, the state of the world is described by discrete data, and the state changes stochastically at discrete moments in time. All those features are already present in the class of models I described in Part 7. But today’s models are far more general, because the state of the world is described in a more general way! Now the state of the world at any moment of time is a C-set: a functor

$C \to \mathsf{Set}$

from some fixed finitely presented category C to the category of sets.

C-sets are a flexible generalization of directed graphs. For example, a thing like this is a C-set for an appropriate choice of C:

There are also C-sets that look even less like graphs.
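To make the definition a bit more concrete, here is a minimal Python sketch of a directed graph viewed as a C-set. This is not AlgebraicJulia code. It assumes C is the category with two objects, E and V, and two generating morphisms src, tgt: E → V with no equations; the names `CSet`, `is_valid`, `apply` and `graph` are made up for illustration.

```python
from dataclasses import dataclass

@dataclass
class CSet:
    """A functor C -> Set, given only on the generators of C (toy version)."""
    sets: dict   # object of C -> finite set
    maps: dict   # generating morphism -> (domain object, codomain object, dict)

    def is_valid(self):
        # Each generating morphism must be a total function between the right sets.
        for dom, cod, f in self.maps.values():
            if set(f) != set(self.sets[dom]):
                return False
            if not set(f.values()) <= set(self.sets[cod]):
                return False
        return True

    def apply(self, name, x):
        # Evaluate the function assigned to the morphism `name` at the element x.
        return self.maps[name][2][x]

# A directed graph with edges e1: a -> b and e2: b -> c.
graph = CSet(
    sets={"E": {"e1", "e2"}, "V": {"a", "b", "c"}},
    maps={
        "src": ("E", "V", {"e1": "a", "e2": "b"}),
        "tgt": ("E", "V", {"e1": "b", "e2": "c"}),
    },
)
```

Choosing a bigger C, with more objects and morphisms, gives data that looks less and less like a graph, but the bookkeeping stays the same: one set per object, one function per morphism.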

C-sets have been implemented in AlgebraicJulia, a software framework for doing scientific computation with categories. To learn more, start here:

• Evan Patterson, Graphs and C-sets I: What is a graph?, AlgebraicJulia Blog, 1 September 2020.

There’s a lot more on this blog explaining things you can do with C-sets, and how they’re implemented in AlgebraicJulia. We plan to take advantage of all this stuff!

In particular, we’ll use ‘double pushout rewriting’ to specify rules for how a C-set can change with time. If you’re not familiar with this concept, start here:

• nLab, Double pushout rewriting.

This concept is well-understood (by those who understand it well), so I’ll just roughly sketch it. In double pushout rewriting for C-sets, a rewrite rule is a diagram of C-sets

$L \hookleftarrow I \rightarrow R$

To apply this rewrite rule to a C-set S, we find inside that C-set an instance of the pattern L, called a ‘match’, and replace it with the pattern R. These ‘patterns’ are themselves C-sets. The C-set I can be thought of as the common part of L and R. The maps $I \to L$ and $I \to R$ tell us how this common part fits into L and R.
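Here is a rough Python sketch of this idea in the special case of directed graphs. It is only meant to illustrate the delete-then-glue pattern, and it makes simplifying assumptions that the general definition does not: I sits inside both L and R as a subgraph (so both maps are inclusions), and the match is injective. The representation (a dict with a vertex set `"V"` and an edge table `"E"`) and the names `fresh` and `rewrite` are hypothetical, not the AlgebraicRewriting.jl API.

```python
def fresh(name, used):
    """Return a label of the form (name, k) that is not already in `used`."""
    k = 0
    while (name, k) in used:
        k += 1
    return (name, k)

def rewrite(S, L, I, R, vmap, emap):
    """Apply the rule L <- I -> R to S at the injective match (vmap, emap): L -> S.

    Returns the rewritten graph, or None if the dangling condition fails.
    """
    # Whatever L matches but I does not keep gets deleted.
    dead_v = {vmap[v] for v in L["V"] - I["V"]}
    dead_e = {emap[e] for e in L["E"].keys() - I["E"].keys()}
    # Dangling condition: no surviving edge may touch a deleted vertex.
    for e, (s, t) in S["E"].items():
        if e not in dead_e and (s in dead_v or t in dead_v):
            return None
    # Pushout complement: remove the deleted parts from S.
    new_v = S["V"] - dead_v
    new_e = {e: st for e, st in S["E"].items() if e not in dead_e}
    # Pushout: glue in the parts of R that are not already in I.
    place = {v: vmap[v] for v in I["V"]}
    for v in sorted(R["V"] - I["V"]):
        place[v] = fresh(v, new_v)
        new_v = new_v | {place[v]}
    for e in sorted(R["E"].keys() - I["E"].keys()):
        s, t = R["E"][e]
        new_e[fresh(e, new_e)] = (place[s], place[t])
    return {"V": new_v, "E": new_e}

# Rule: subdivide an edge a -> b into a -> c -> b, keeping the endpoints.
L = {"V": {"a", "b"}, "E": {"e": ("a", "b")}}
I = {"V": {"a", "b"}, "E": {}}
R = {"V": {"a", "b", "c"}, "E": {"f": ("a", "c"), "g": ("c", "b")}}

S = {"V": {"x", "y", "z"}, "E": {"p": ("x", "y"), "q": ("y", "z")}}
result = rewrite(S, L, I, R, vmap={"a": "x", "b": "y"}, emap={"e": "p"})
```

In this example the edge `p` is removed and a new vertex with two new edges is glued in between `x` and `y`. A rule that deletes a vertex would return `None` when applied to `y`, because the edges `p` and `q` would be left dangling.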

Note that in this incredibly sketchy explanation I am already starting to use maps between C-sets! Indeed, for each category C there’s a category called $C\mathsf{Set}$ with:

• functors $C \to \mathsf{Set}$ as objects: we call these C-sets;

• natural transformations between such functors as morphisms: we call these C-set maps.

Categories of this sort have been intensively studied for many decades, and there’s a huge amount we can do with them:

• nLab, Category of presheaves.

I used C-set maps in a couple of places above. First, the arrows here

$L \hookleftarrow I \rightarrow R$

are C-set maps. For slightly technical reasons we demand that $I \to L$ be monic: that’s why I drew it with a hooked arrow. Second, I introduced the term ‘match’ without defining it. But we can define it: a match of L to a C-set S is simply a C-set map

$L \to S$
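For directed graphs, a C-set map is just a graph homomorphism, so finding all matches of L in S means finding all homomorphisms from L to S. Here is a brute-force Python sketch of that search, fine for tiny examples only. The graph representation and the name `find_matches` are my own assumptions for illustration, and the `monic` flag is an optional extra, since the definition above does not require matches to be monic.

```python
from itertools import product

def find_matches(L, S, monic=False):
    """Yield every graph homomorphism L -> S as a pair (vertex map, edge map)."""
    l_vs, l_es = sorted(L["V"]), sorted(L["E"])
    for v_img in product(sorted(S["V"]), repeat=len(l_vs)):
        vmap = dict(zip(l_vs, v_img))
        # For each edge of L, list the edges of S with the right source and target.
        options = []
        for e in l_es:
            s, t = L["E"][e]
            options.append(
                [e2 for e2 in sorted(S["E"]) if S["E"][e2] == (vmap[s], vmap[t])]
            )
        for e_img in product(*options):
            emap = dict(zip(l_es, e_img))
            if monic:
                injective_v = len(set(vmap.values())) == len(vmap)
                injective_e = len(set(emap.values())) == len(emap)
                if not (injective_v and injective_e):
                    continue
            yield vmap, emap

# Pattern: a single edge a -> b.
L = {"V": {"a", "b"}, "E": {"e": ("a", "b")}}
# Target: x -> y -> z, plus a loop at z.
S = {"V": {"x", "y", "z"}, "E": {"p": ("x", "y"), "q": ("y", "z"), "r": ("z", "z")}}

all_matches = list(find_matches(L, S))
monic_matches = list(find_matches(L, S, monic=True))
```

Here the pattern has one match for each edge of S, including the loop, where both vertices of L land on `z`. Asking for monic matches rules out that last one.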

And now for some good news: Kris Brown has already implemented double pushout rewriting for C-sets in AlgebraicJulia:

• GitHub, AlgebraicRewriting.jl.

Stochastic C-set rewriting systems

Now comes the main idea I want to explain.

A stochastic C-set rewriting system consists of:

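1) a category C

2) a finite collection of rewrite rules $\rho_i = (L_i \hookleftarrow I_i \rightarrow R_i)$

3) for each rewrite rule $\rho_i$ in our collection, a timer $T_i$. This is a stochastic map

$T_i \colon [0,\infty) \to [0,\infty]$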

That’s all.

What does this do for us? First, it means that for each choice of rewrite rule $\rho_i$ in our collection, with its timer $T_i$, and for each so-called start time $t \ge 0$, we get a probability measure $T_i(t)$ on $[0,\infty]$.

Let’s write $w_i(t)$ to mean a randomly chosen element of $[0,\infty]$ distributed according to the probability measure $T_i(t)$. We call $w_i(t)$ the wait time, because it says how long after time $t$ we should wait until we apply the rewrite rule $\rho_i$. The time $t + w_i(t)$ is called the rewrite time.

In what follows, I’ll always assume these randomly chosen numbers are stochastically independent—even if we reuse the same timer repeatedly for different tasks.
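In code, one simple way to model a timer is as a function that takes a start time and a random number generator and returns a sampled wait time. The Python sketch below takes that approach; the function names and the particular distributions are just illustrative assumptions, not part of the formalism. Each call draws fresh random numbers from the generator, which is how the independence assumption shows up here.

```python
import random

def exponential_timer(rate):
    """Timer that ignores the start time: the wait is exponential with this rate."""
    def timer(t, rng):
        return rng.expovariate(rate)
    return timer

def slowing_timer(t, rng):
    """Timer that depends on the start time: the mean wait is 1 + t."""
    return rng.expovariate(1.0 / (1.0 + t))

def rewrite_time(timer, t, rng):
    """Sample a wait time for start time t and return the rewrite time."""
    return t + timer(t, rng)

rng = random.Random(42)
T = exponential_timer(2.0)

# Five independent rewrite times, all for the same timer and start time.
samples = [rewrite_time(T, 3.0, rng) for _ in range(5)]

# A rewrite time for a timer started late, when it has slowed down.
late = rewrite_time(slowing_timer, 10.0, rng)
```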

Running a stochastic C-set rewriting system

Okay, so how do we actually use this for modeling? How do we ‘run’ a context-independent stochastic C-set rewriting system? I’ll sketch it out.

The idea is that at any time $t \ge 0$ the state of the world is some C-set, say $S_t$. If you give me the initial state of the world $S_0$, the stochastic C-set rewriting system will tell you how to compute the state of the world at all later times. But this computation involves randomness.

Here’s how it works:

We start at $t = 0$. We look for all matches to patterns $L_i$ in the initial state $S_0$. For each match we compute a wait time $w_i(t)$ and then the rewrite time $t + w_i(t)$, but right now $t = 0$. We make a table of all the matches and their rewrite times.

The smallest of the rewrite times in our table, say $t_1$, is the first time the state of the world can change. We change it by applying the corresponding rewrite rule $\rho_i$ to the state of the world $S_0$. When we do this, we cross off the rewrite time $t_1$ and its corresponding match from our table.

More generally, suppose $t$ is any time when the state of the world changes. It will have changed by applying some rewrite rule $\rho_i$ to the previous state of the world, giving some new C-set $S_t$. When this happens, new matches can appear, and existing matches can disappear. So we do this:

1) For each existing match that disappears, we cross off that match and its rewrite time from our table.

2) For each new match that appears, say one involving the rewrite rule $\rho_j$, we add that match and its rewrite time $t + w_j(t)$ to our table.

We then wait until the smallest rewrite time in our table, say $t'$. At that time, we apply the corresponding rewrite rule to the state $S_t$, getting some new C-set $S_{t'}$. We also cross off the rewrite time $t'$ and its corresponding match from our table.

Then just keep doing the loop.
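The loop above can be sketched in a few lines of Python. To keep the example self-contained I have replaced actual C-set rewriting with a toy stand-in: the state is a tuple of statuses "S", "I", "R", one rule lets an infected individual infect a susceptible one, and another lets an infected individual recover. All names here (`run`, `find_matches`, `apply_rule`, `timers`) are hypothetical, and so is the choice to identify matches by hashable keys so we can tell which ones persist from one state to the next.

```python
import math
import random

def run(state, find_matches, apply_rule, timers, t_max=math.inf, seed=0):
    """Run the loop and return the list of (time, state) pairs.

    find_matches(state) -> list of hashable matches, each starting with a rule index
    apply_rule(state, match) -> new state
    timers[i](t, rng) -> wait time for rule i when started at time t
    """
    rng = random.Random(seed)
    t = 0.0
    # The table of matches and their rewrite times.
    table = {m: t + timers[m[0]](t, rng) for m in find_matches(state)}
    history = [(t, state)]
    while table:
        match, t_next = min(table.items(), key=lambda item: item[1])
        if math.isinf(t_next) or t_next > t_max:
            break
        t = t_next
        del table[match]                 # cross off the match we are applying
        state = apply_rule(state, match)
        current = find_matches(state)
        alive = set(current)
        for m in list(table):            # 1) cross off matches that disappeared
            if m not in alive:
                del table[m]
        for m in current:                # 2) add matches that are new
            if m not in table:
                table[m] = t + timers[m[0]](t, rng)
        history.append((t, state))
    return history

def find_matches(state):
    infected = [k for k, s in enumerate(state) if s == "I"]
    susceptible = [k for k, s in enumerate(state) if s == "S"]
    infections = [(0, s, i) for s in susceptible for i in infected]
    recoveries = [(1, i) for i in infected]
    return infections + recoveries

def apply_rule(state, match):
    new = list(state)
    new[match[1]] = "I" if match[0] == 0 else "R"
    return tuple(new)

timers = [
    lambda t, rng: rng.expovariate(0.5),   # infection
    lambda t, rng: rng.expovariate(1.0),   # recovery
]
history = run(("S", "S", "S", "I"), find_matches, apply_rule, timers)
```

In this toy model an applied match never survives the rewrite. In general it might, and then this sketch would treat it as a new match with a fresh rewrite time, which is one possible reading of the procedure rather than something the description above settles.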

Subtleties

A lot of the subtleties in this formalism involve our use of timers.

For example, I computed wait times using a timer $T_i$, which is a stochastic map

$T_i \colon [0,\infty) \to [0,\infty]$

The dependence on $t \in [0,\infty)$ here means the wait time can depend on when we start the timer. And the fact that this stochastic map takes values in $[0,\infty]$ means the wait time can be infinite. This is a way of letting rewrite rules have a probability < 1 of ever being applied. If you don’t like these features you can easily limit the formalism to avoid them.
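As a small illustration of the second point, here is a Python sketch of a timer whose wait time is infinite with positive probability. The name `maybe_never_timer` and the parameters `p` and `rate` are made up for this example: with probability `p` the wait is exponential, and otherwise it is infinite, so a match using this timer is eventually rewritten with probability at most `p`.

```python
import math
import random

def maybe_never_timer(p, rate):
    """Timer that returns a finite exponential wait with probability p, else infinity."""
    def timer(t, rng):
        if rng.random() < p:
            return rng.expovariate(rate)
        return math.inf
    return timer

rng = random.Random(1)
T = maybe_never_timer(p=0.3, rate=2.0)

# Estimate the probability that the wait time is finite.
waits = [T(0.0, rng) for _ in range(10_000)]
fraction_finite = sum(1 for w in waits if math.isfinite(w)) / len(waits)
```

An infinite wait time gives an infinite rewrite time, so any code that picks the smallest rewrite time from a table needs to stop when that smallest time is infinite.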

The more serious subtleties involve whether and how to change wait times as the state of the world changes. For example, we can imagine more general timers that explicitly depend on the current state of the world as well as the time $t$. However, in this case I am confused about how we should update our table of wait times as the state of the world changes. So I decided to postpone discussing this generalization!